There are so many unknowns for early-stage companies. There is constant pivoting, with a rising number of stakeholders. With so many differing opinions, ideas, and hypotheses on how your company should run and how your product should be modified, you couldn’t hope to try them all.

But what if you could?

Building an experimentation culture is key for early-stage companies because it lays the foundation for your company culture and shapes how your team approaches problem-solving.

A strong experimentation culture gives your team the courage to embrace the unknown. When failure is no longer feared but embraced as your greatest resource for learning, an experimentation culture is truly at work.

There are many other benefits of such a culture. It can:

- Keep the team constantly learning and communicating.

- Help you determine the best way to delight your customers.

- Eliminate HIPPO (highest paid person’s opinion) thinking, allowing everyone to contribute ideas that can be tested and then validated or disproven.

Experimentation can be put to work in many areas of your organization: your internal processes, your tech stack, or your go-to-market strategy. But the real money maker is your product, and that’s where the magic needs to happen.

A great way to introduce the experimentation mindset is to implement A/B testing in your product development playbook.

If you aren’t sure how to do that, no worries! We’ve got you. This is your guide to getting started with A/B testing! We will quickly explain the basics of A/B testing and then explore the what, who, when, why, and how.

It’s that easy, so let’s get started.

A/B Testing Defined:

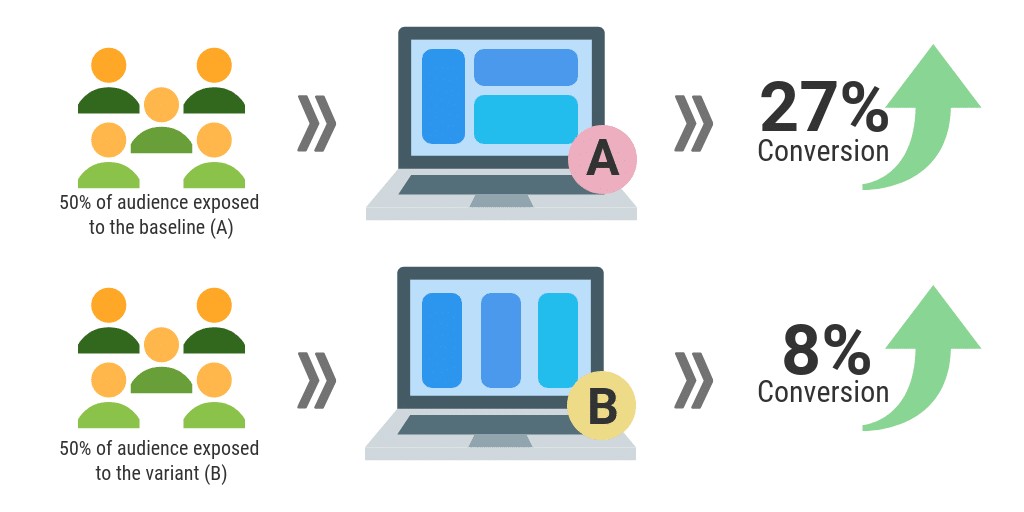

A/B testing runs two variations of your product simultaneously to identify which performs better. By showing two different user experiences to specified user groups, you let real user behavior determine which one performs best against your goals.

A/B Testing Breakdown:

- Get your baseline ready for testing: this is the current version of your website or app.

- Develop a hypothesis you want to test on the market with real customers.

- Create the variant with the changes needed based on your hypothesis.

- Segment your audience to determine who will be seeing each variation.

- Run the experiment to collect data and observe customer behavior.

- Analyze the results to determine the winning variant.
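The segment, run, and analyze steps above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular testing tool works: the function names `assign_variant` and `conversion_rates`, the experiment name, and the sample events are all hypothetical. It assumes each user has a stable ID and that a hash of that ID is an acceptable way to split traffic evenly.

```python
import hashlib


def assign_variant(user_id, experiment, variants=("baseline", "variant")):
    """Deterministically map a user to a variant.

    Hashing the experiment name together with the user ID means the same
    user always sees the same variation, without storing any assignment.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]


def conversion_rates(events):
    """Summarize (variant, converted) pairs into a rate per variant."""
    totals = {}
    conversions = {}
    for variant, converted in events:
        totals[variant] = totals.get(variant, 0) + 1
        conversions[variant] = conversions.get(variant, 0) + (1 if converted else 0)
    return {variant: conversions[variant] / totals[variant] for variant in totals}


if __name__ == "__main__":
    # Hypothetical users and outcomes, for illustration only.
    for user in ("user-1", "user-2", "user-3"):
        print(user, "->", assign_variant(user, "pricing-page"))

    sample_events = [("baseline", True), ("baseline", False), ("variant", True)]
    print(conversion_rates(sample_events))
```

Raw conversion rates alone do not tell you whether a difference is real or just noise, so treat the summary as a starting point for the analysis step rather than the final verdict.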

Here is the what, who, when, why, and how of A/B testing, with examples of ideas to try and inspiration from brands that lead in experimentation.

What Should You A/B Test?

The short answer is everything and anything! Any customer-facing element of a digital journey on your mobile app, website, or communication piece should be tested and validated by none other than the customer. Here are four ideas you can test right away:

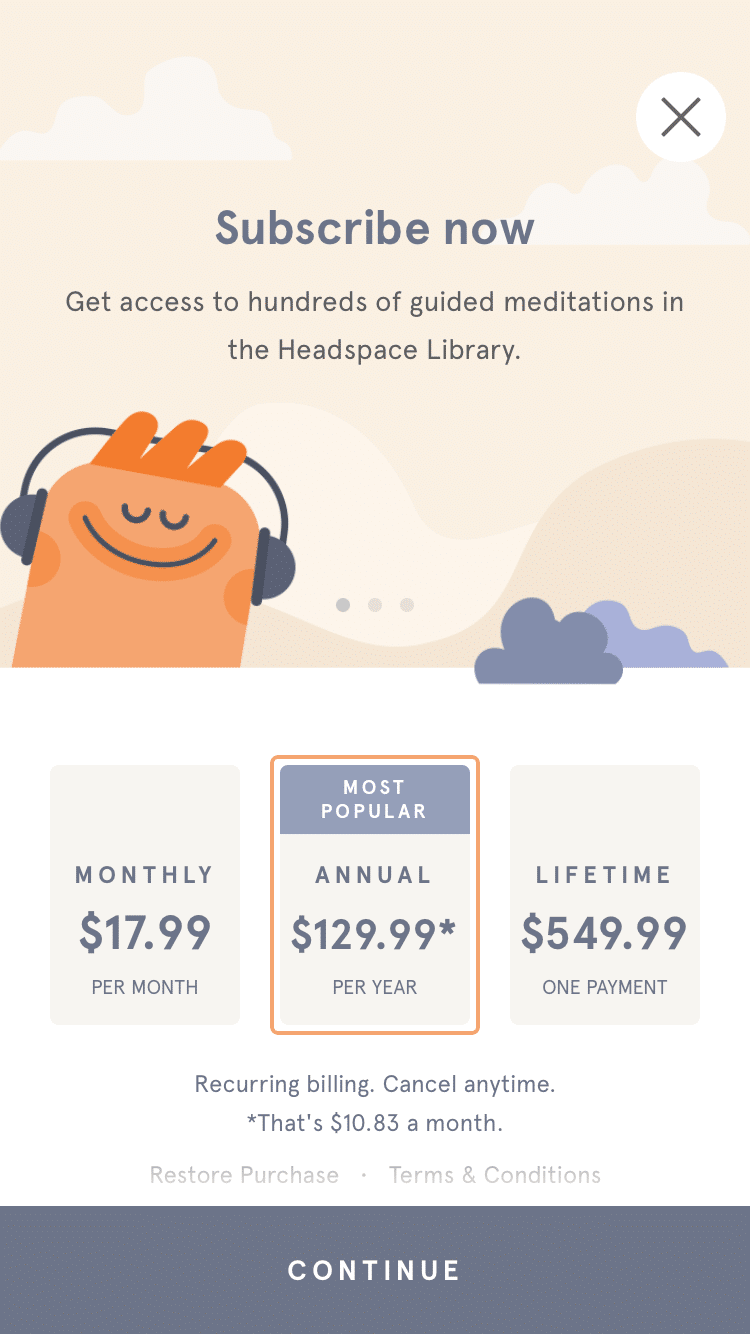

1. Payment/Subscription Layout

The popularity of the ‘try before you buy’ or freemium model has put conversion and user acquisition at the top of everyone’s mind lately. As a result, your payment and subscription layout has become a make-or-break factor for your retention and bottom line. This is your time to finally pop the question and ask your user to upgrade or fully commit to you, so a lot is riding on this.

Headspace, for example, highlights its annual package in orange and labels it the most popular of its three plans. How you distinguish the most popular or best-value deal, and the wording you use overall, is something you can play around with to see whether it persuades your users to make that selection.

Key Takeaway: This strategy is called price anchoring and is just one of the psychological strategies you can test. Create a hypothesis around what would incentivize users to upgrade. Depending on your product offering, the incentive could be pricing, timing, extra lives, or something else. Then get creative and test it out! You never know until you try.

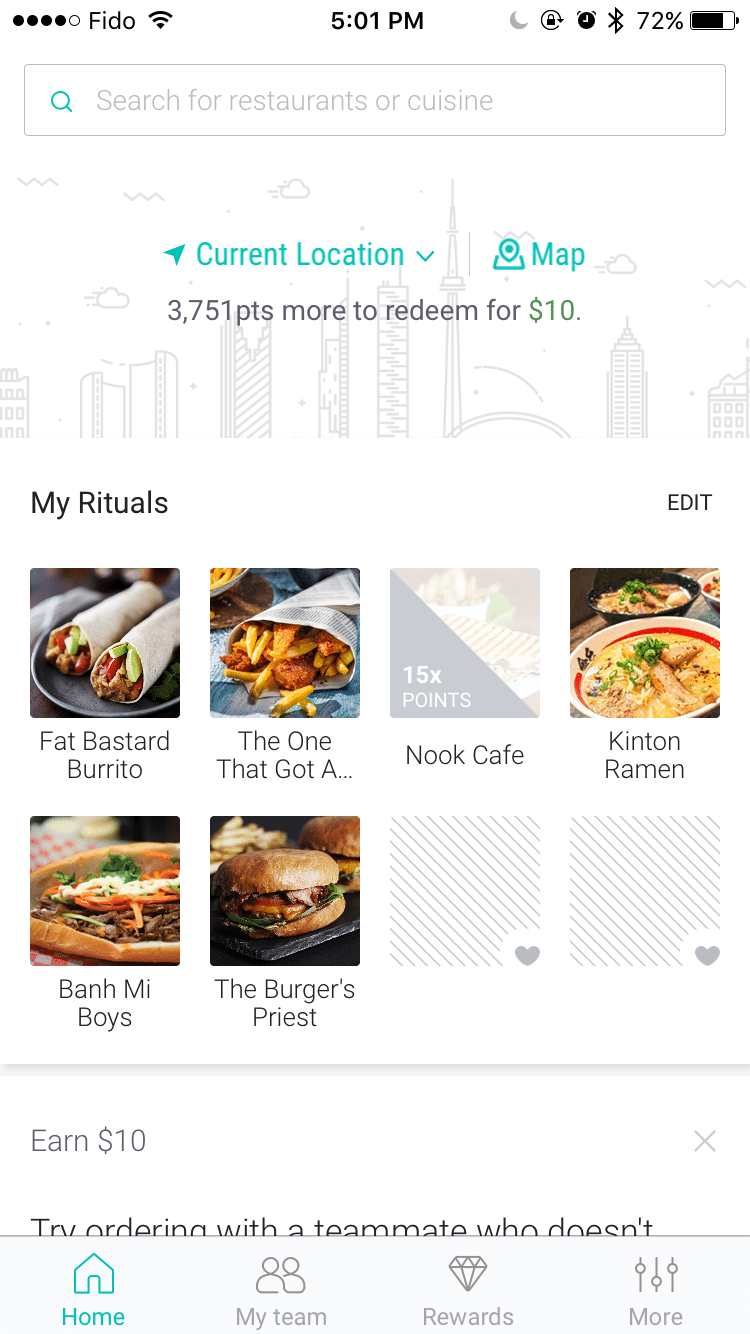

2. Personalization

Let’s face it: with so many options, it’s not about proving to customers that you’re special but making them feel special. Customers recognize and appreciate when companies go the extra mile to make the customer experience feel intimate but not intrusive.

Food ordering app Ritual ensures that your favorite restaurants are featured first every time you open the app. By reducing the friction in the process, the user can get to their favorites faster or get reminded of how quickly they can satisfy their cravings.

Key Takeaway: Show your users that you understand their needs by adding a personal touch. From the recommendations based on their past behavior to the messaging delivered in your emails or push notifications, you can never go wrong with making the experience more personal and contextual for the customer.

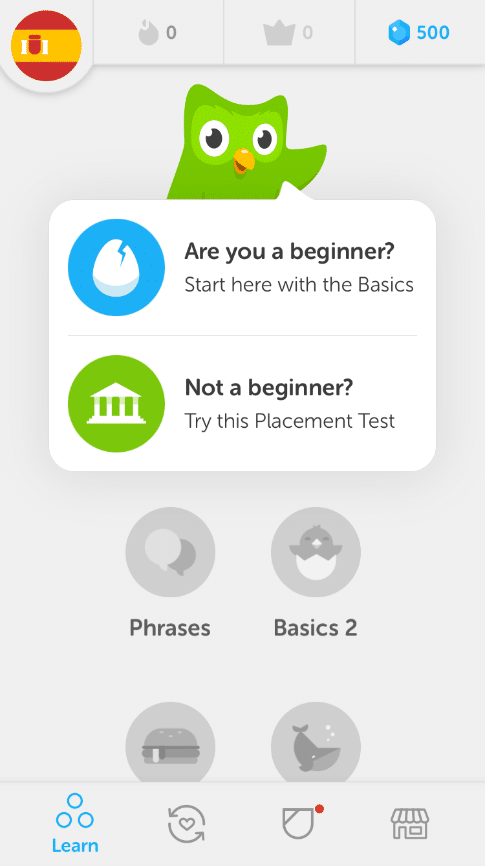

3. Onboarding Flows

First impressions matter! And in our digital world, the first impression lies in onboarding. This is where you develop trust with your user and prove your value. Customers can give up with just a click of a button nowadays, so it’s important to nail your first impression.

Duolingo’s owl mascot accompanies you during the onboarding process, which is an incredibly cute touch and puts a little more personality into the process. They have also chosen very upbeat and colorful graphics, which make the sometimes lengthy task of signing up less intimidating and easier to understand.

Key Takeaway: Play around with fun characters and explore what graphics, language, number of steps, and amount of information required best eliminate friction during onboarding.

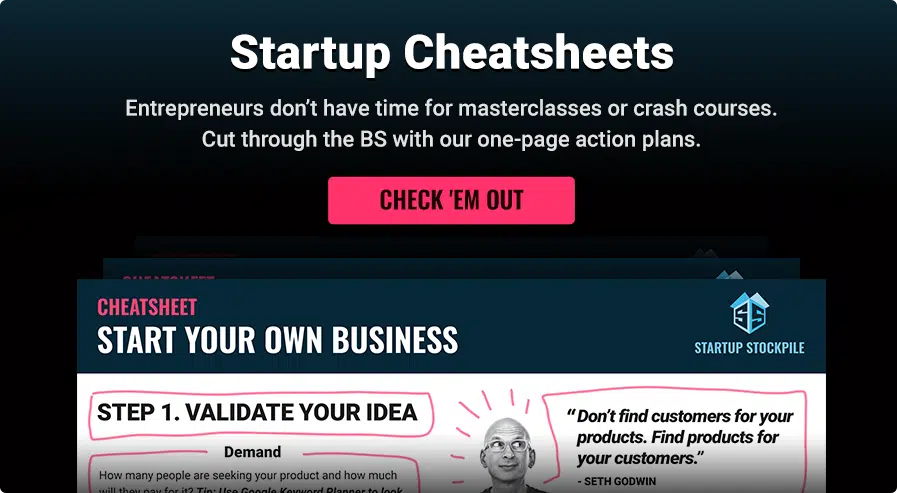

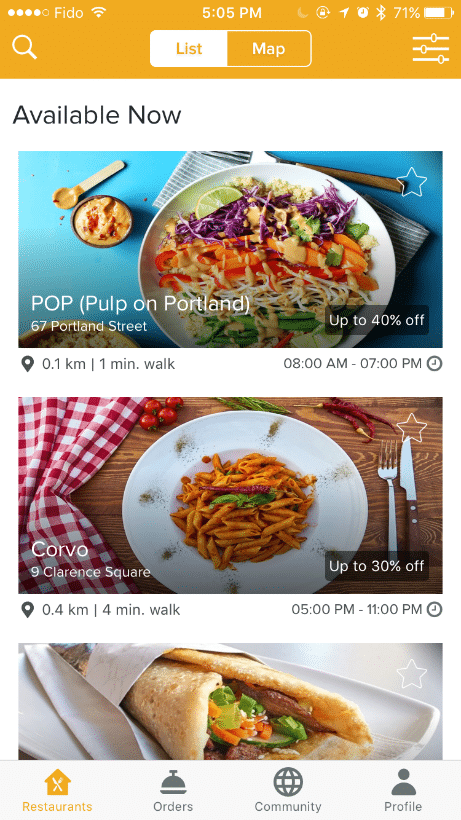

4. Page Layout

It’s not always about what you say but how you say it. People judge books by their covers and apps by their icons. The combination of graphics, colors, and formats you use to tell your story could turn off customers.

No matter how great your product is, if the UX/UI doesn’t resonate with users, they won’t ever see the magic behind your product.

Apps like Feedback go through multiple versions of their interface to ensure their users get the best experience. See below how they display all their offerings with bright, colorful pictures, and users can scroll downwards to see the other options, one by one.

Key Takeaway: You’re not restricted to just one column, motion, or menu layout. Play around with a horizontal scroll, multiple columns, or thumbnails. It’s your job to discover what makes the experience as simple and pleasant as possible for your users.

Bottom line: Whether you have a mobile app or an online store, if you’re not A/B testing, you’re flying blind.

Who is A/B Testing?

From small startups to huge corporations, companies of all sizes are desperate to stay on top of the rapidly evolving needs and expectations of their customers, who are digitally savvy and insanely impatient.

Two industry leaders have found comfort in tackling this issue through rigorous A/B testing.

Booking.com has been a champion in the A/B testing space and has been reaping its benefits for over eight years. The company holds conversion rates well above the industry average (almost triple the success rate!) and attributes all of its success to its constant testing.

Instead of spending their time in lengthy discussions debating the what-ifs, they get the results they need to execute quickly. The company believes in “guidelines, not rules,” enabling it to run more than 1000 experiments simultaneously. Their strong experimentation culture, which has complete buy-in, gives them immense clarity.

And then there’s Netflix.

It’s a whole new experience every time you open up Netflix, thanks to their relentless desire to discover what pleases their viewers the most. Everything from layout to sign-in to content features is tested repeatedly.

The most notable of their experiments are the changes in their artwork. User engagement is too important a metric for Netflix to leave up to chance, so they test the relationship between each piece of content artwork and its contribution to user engagement.

By comparing click-through rate and time spent in the app across variations, Netflix can draw conclusions grounded in data and real consumer behavior about what will best optimize the overall customer experience.
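Comparing click-through rates between two variations usually means asking whether the gap is bigger than chance alone would produce. The sketch below uses a standard two-proportion z-test to do that. It is a generic illustration, not Netflix’s actual method, and the function name `two_proportion_z_test` and the click and view counts are made up for the example.

```python
import math


def two_proportion_z_test(clicks_a, views_a, clicks_b, views_b):
    """Compare two click-through rates with a two-sided z-test.

    Returns (rate_a, rate_b, z, p_value). A small p_value suggests the
    difference between the rates is unlikely to be due to chance alone.
    """
    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b

    # Pooled rate under the assumption that both variations perform the same.
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    if std_error == 0:
        raise ValueError("Cannot test: every view clicked, or none did.")

    z = (rate_b - rate_a) / std_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value


if __name__ == "__main__":
    # Hypothetical counts: artwork A vs. artwork B.
    rate_a, rate_b, z, p_value = two_proportion_z_test(100, 1000, 150, 1000)
    print(f"A: {rate_a:.1%}  B: {rate_b:.1%}  z = {z:.2f}  p = {p_value:.4f}")
```

This test assumes reasonably large, independent samples. For a continuous metric such as time spent, a different test (for example, a t-test) would be the more natural choice.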

When to A/B Test?

Unless you can make a decision with 100% certainty, you can and should be A/B testing to continuously iterate on your product and keep up with changing user needs. Implementing experimentation in every step reaffirms your commitment to growth, but it requires an internal commitment to an experimentation culture. By framing failures and discoveries as lessons, you’ll be empowered to optimize the user experience.

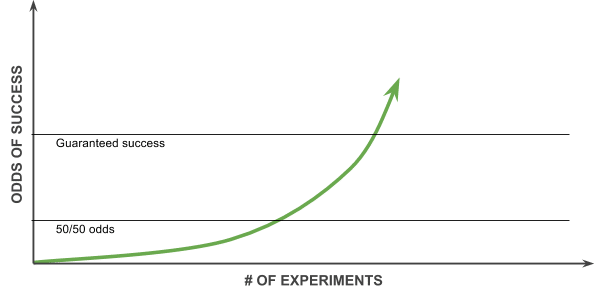

It’s no wonder Facebook has advocated for testing and even adopted the 10,000 Experiment Rule. Running many experiments has allowed them to place small bets to secure big wins. They test every theory and assumption, validating each idea so the team stays informed and data-driven.

Instead of deliberate practice, which often involves lengthy debates and discussions, they encourage deliberate experimentation. Getting results quickly allows them to learn and move at the same pace as the market, which some may say is their secret to staying on top.

Why All the Fuss?

A/B testing is powerful because you can validate your ideas against real-user data. It helps mitigate the risk of putting all your eggs in one basket as the anti-HIPPO decision-making trend rises. A/B testing gives companies the gift of choice, an open mind, and, most importantly, real growth.

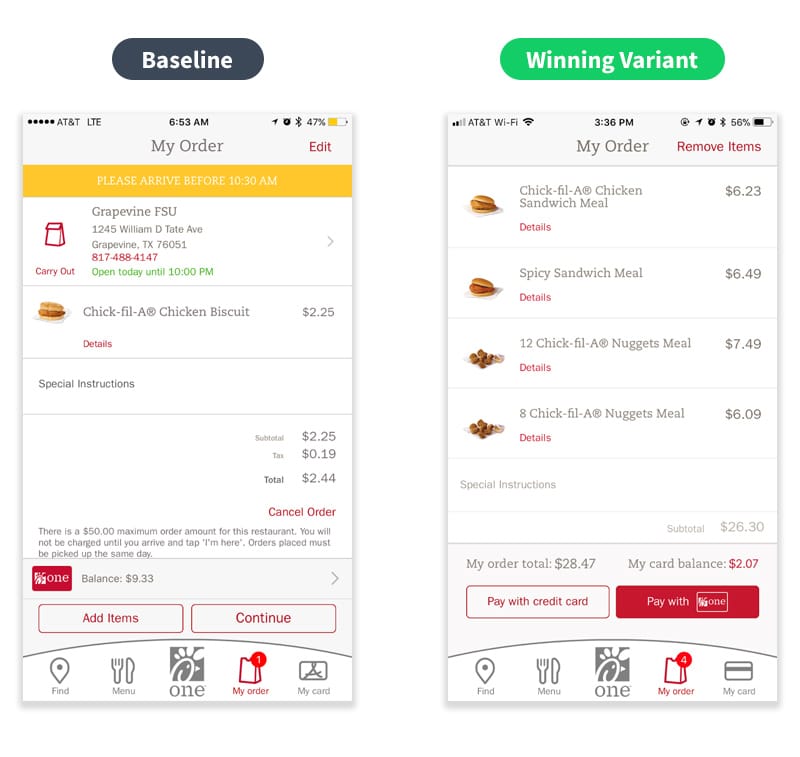

Chick-fil-A’s journey with A/B testing demonstrates why it has become an integral part of business in this digital age. Originally, their app’s misleading UX led customers to believe that they could only pay with the company’s loyalty card, which hurt their cart conversions.

Once they decided to test new layouts and CTAs, credit card payments increased by 6%, and customer service inquiries hit zero within just one month! A simple change was able to drive app conversions and solve what could have been a million-dollar problem.

How Do You A/B Test?

A/B testing can get complicated, time-consuming, and expensive. But it doesn’t have to be. You can give your development team simple tools like website heatmaps or comprehensive testing applications like Taplytics to make the A/B testing process faster and easier to understand.

At Taplytics, we’ve taught hundreds of companies how to create experiments to understand and grow their user base. Our customer success team helps you identify the levers in your product to grow your user base, drive conversions, retain users, and empower you to make data-informed decisions.

So, if you’re excited to get a jumpstart on A/B testing, Taplytics can have you running your first experiment within 30 days.

Ready, Set, Test!

There are many avenues and ideas to explore, but this shouldn’t scare you. It should excite you! Especially at startups, A/B testing lets you explore new ideas and validate decisions that will help you optimize your customer experience.

Harness all these possibilities so that you can reach your full potential.

Remember:

- Nothing is off limits: test everything and anything.

- It’s always a good time to test.

- Move purposefully – get the data you need to make the hard decisions easier.

Editor’s Note: This article is part of the startup tools blog series Start Your Business brought to you by the marketing team at Unitel, the virtual phone system priced and designed for startups and small business owners.